The Attribution Stack was featured in our post on Reforge: “The Attribution Stack: How to Make Budget Decisions in a Post-iOS14 World”

We’re in the media measurement space, so our inboxes and DMs are full of requests for help navigating the murky world of marketing attribution (especially since iOS14). Every vendor promises a ‘single source of truth’, but that’s often just a nice story the salesperson tells to get you to sign on the dotted line. Every method has its strengths and weaknesses.

We have a map in our head of the attribution landscape from years of experience with these techniques, and backgrounds in advanced statistics to help us understand the jargon. Most marketers don’t have much experience with attribution – they aren’t even aware of what methods are available – and there are few good resources available to learn from.

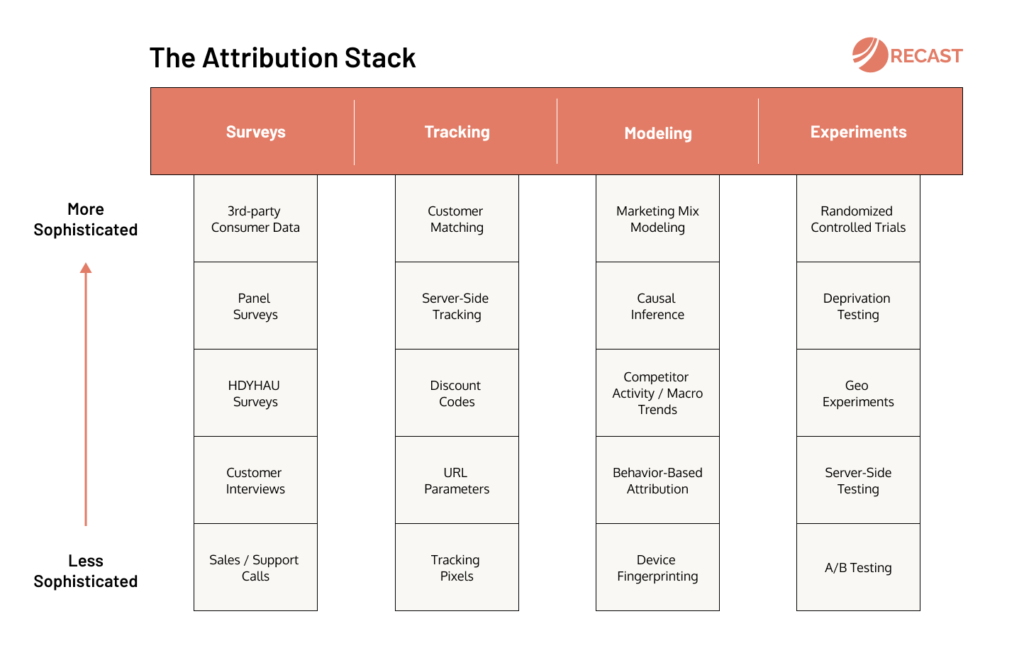

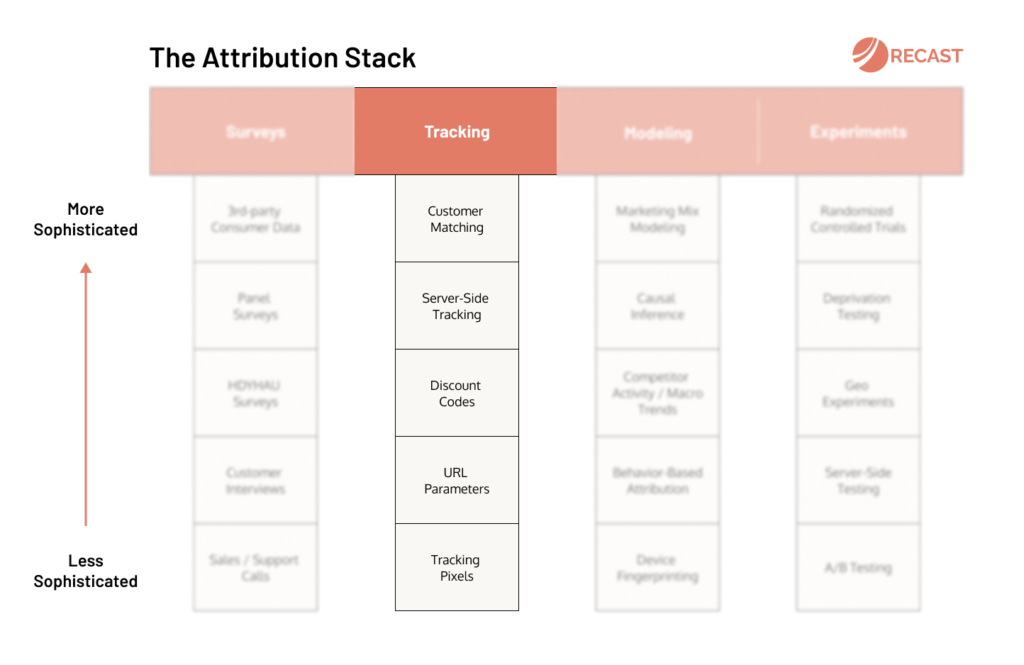

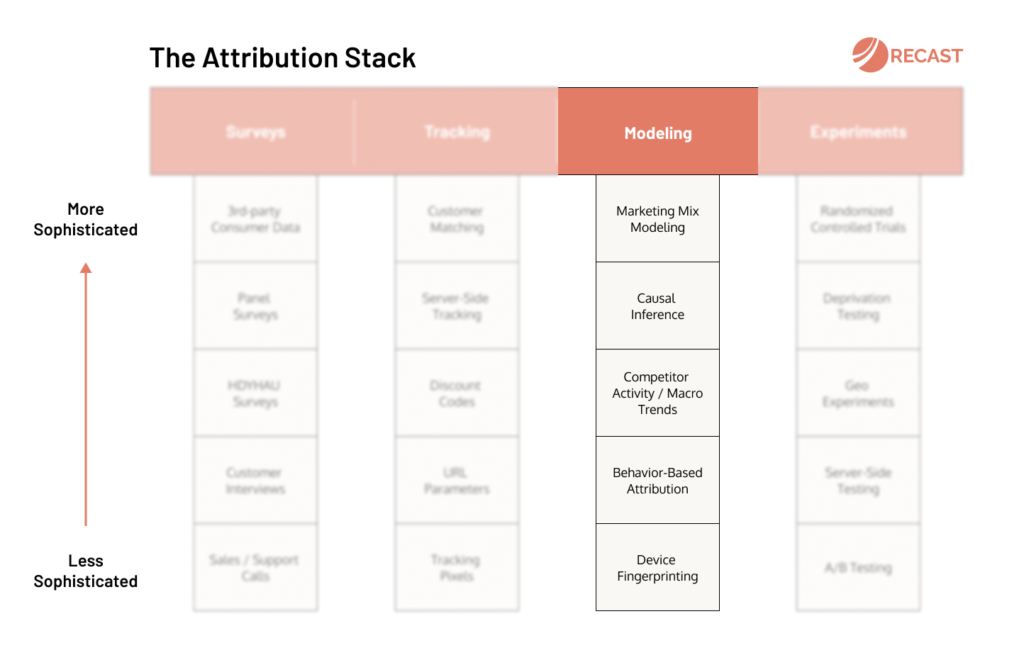

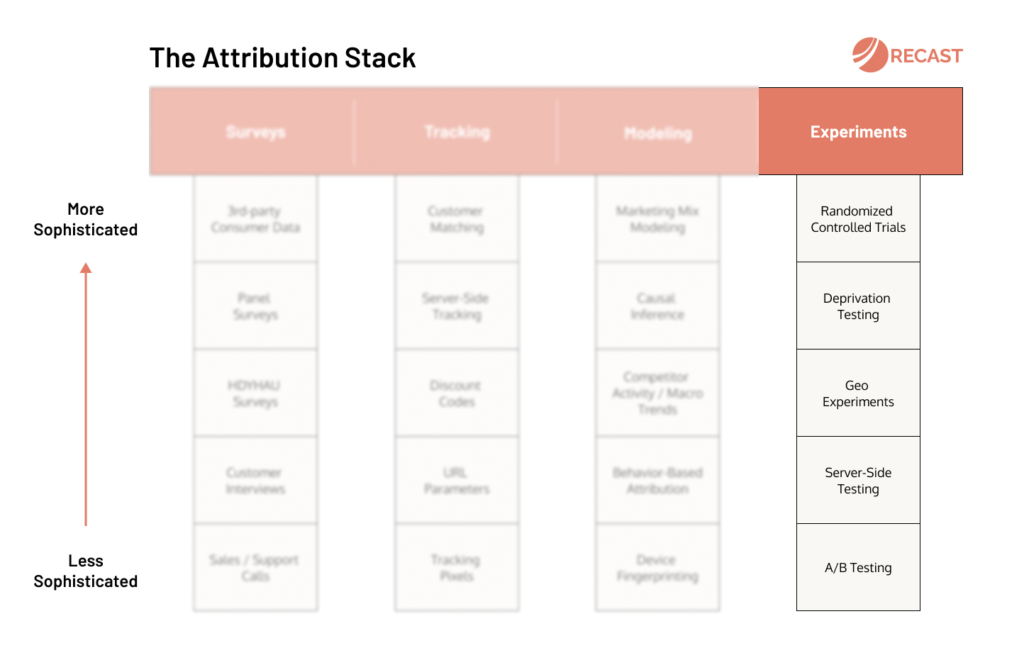

So we created The Attribution Stack: a visual map of the attribution landscape. Somewhere to send people whether they don’t know where to start, or need more sophisticated measurement than what they have now. A guide for navigating the complexities of marketing attribution.

You can see all the methods that are available for measuring the impact of marketing, and how they relate to each other, so you know where you are on your journey. We’ll cover the strengths and weaknesses of each approach, so you can make smarter decisions about what will do the most to help your company eliminate wasted advertising spend.

Every attribution method is categorized into one of the four pillars of media measurement: Surveys, Tracking, Modeling, and Experiments. Most companies combine multiple methods, as it helps to triangulate the truth from different angles.

You should work from the bottom (less sophisticated) to the top (more sophisticated) and from the left (lower traffic requirements) to the right (higher traffic requirements) as you grow. In the early days just talking to customers is enough, then when you get bigger you’ll have enough traffic to reach statistical significance in your experiments.

The way you use these methods will evolve over time: don’t expect tracking to be finally ‘done’ once the next task is finished. It’s always changing. Eventually you’ll probably use most of these methods, so it helps to familiarize yourself with as many as possible.

These are our core beliefs, underpinning The Attribution Stack:

- There are many more methods than most people realize

- Each attribution method has its own strengths and weaknesses

- Start with one or two methods and evolve with your needs

- You’ll use most of these methods eventually as you scale

- Tracking is never done: build a culture of continuous improvement

Attribution Methods

In this section we’ll give a broad overview of each attribution method and how it works. We’ll cover the strengths and weaknesses of each technique, so you can judge whether it makes sense for the specific measurement challenges your business faces right now.

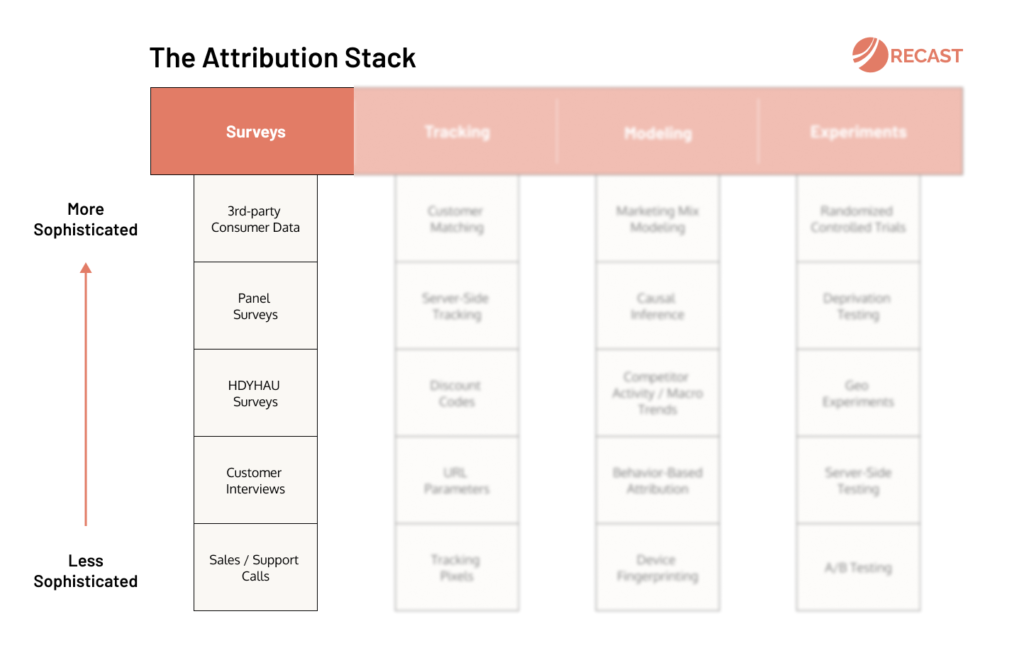

Surveys

It’s good advice to remember to talk to your users, and better advice to ask them how they heard about you. The best companies keep up the customer obsession as they grow, and graduate from asking customers directly to tracking their responses in large-scale surveys or spending analysis.

Sales / Support Calls

Ask customers directly how they found out about you on sales / customer support calls.

- Strength: easy to work into existing process

- Weakness: often inconsistently recorded

Customer Interviews

Conduct formal customer interviews and ask how they heard about this company / product.

- Strength: plenty of context given

- Weakness: time consuming

HDYHAU Surveys

Ask ‘How Did You Hear About Us’ in a survey within your checkout or registration flow.

- Strength: easy to set up and interpret

- Weakness: people lie on surveys

Panel Surveys

Track brand awareness over time by surveying a panel of people who’ve opted in to surveys.

- Strength: unique insight into long term trends

- Weakness: cost and long term commitment

3rd-party Consumer Data

Purchase transaction-level data from vendors to track customer purchase behavior.

- Strength: uniquely comprehensive insight

- Weakness: expensive and difficult to access

Tracking

Nobody stays a last-click marketer for long. Eventually you notice that what you see in Facebook ads doesn’t match what you see in Google Analytics, and start driving hard for independent measurement. Some channels are harder to track than others, so techniques range from URL parameters to discount codes and server-side tracking of logged-in users.

Tracking Pixels

Fire a tracking event when someone visits a page or completes an action on your website.

- Strength: most common method of tracking

- Weakness: biased towards the platform

URL Parameters

Add parameters to your URL, e.g. ‘utm_source’ to track the marketing source of campaigns.

- Strength: reliable and simple to use

- Weakness: not available in iOS apps or offline
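
To make the idea concrete, here is a minimal sketch of how a landing-page URL could be parsed for its `utm_*` parameters using Python’s standard library. The URL, the parameter values and the `extract_utm` helper are made up for illustration; your analytics tool will normally do this for you.

```python
from urllib.parse import urlparse, parse_qs


def extract_utm(url: str) -> dict:
    """Return the utm_* parameters found in a landing-page URL."""
    query = parse_qs(urlparse(url).query)
    # parse_qs returns a list of values per key; keep the first value
    # of each key that starts with "utm_" and ignore everything else.
    return {key: values[0] for key, values in query.items() if key.startswith("utm_")}


# Hypothetical landing-page URL from an email campaign.
landing_url = (
    "https://example.com/pricing"
    "?utm_source=newsletter&utm_medium=email&utm_campaign=spring_sale&ref=abc"
)

print(extract_utm(landing_url))
```

Anything that isn’t prefixed with `utm_` (like `ref` above) is dropped, and a URL with no parameters simply returns an empty dictionary.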

Discount Codes

Give users a code unique to the campaign they can use on checkout to earn a discount.

- Strength: ubiquitous, but reliability varies depending on the product and discount value

- Weakness: expensive (you have to give a discount!)

Server-Side Tracking

Fire tracking events on the server rather than on the website, to evade tracking prevention.

- Strength: regain control over data

- Weakness: difficult to set up, limited to digital channels

Customer Matching

Stitch user sessions across devices when they are logged in using a unique user id.

- Strength: full customer view

- Weakness: requires users to log in across multiple devices
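
As a rough sketch of what stitching looks like, the snippet below groups sessions by user id and sets aside the ones where nobody was logged in. The session records and field names (`session_id`, `device`, `user_id`, `source`) are assumptions made up for this example, not the schema of any particular tool.

```python
from collections import defaultdict

# Hypothetical session log: user_id is None when the visitor wasn't logged in.
sessions = [
    {"session_id": "s1", "device": "phone", "user_id": "u42", "source": "facebook"},
    {"session_id": "s2", "device": "laptop", "user_id": "u42", "source": "google"},
    {"session_id": "s3", "device": "tablet", "user_id": None, "source": "direct"},
    {"session_id": "s4", "device": "laptop", "user_id": "u7", "source": "email"},
]


def stitch_sessions(sessions):
    """Group sessions into per-user journeys; anonymous sessions can't be stitched."""
    journeys = defaultdict(list)
    unmatched = []
    for session in sessions:
        if session["user_id"] is None:
            unmatched.append(session["session_id"])
        else:
            journeys[session["user_id"]].append((session["device"], session["source"]))
    return dict(journeys), unmatched


journeys, unmatched = stitch_sessions(sessions)
print(journeys)   # one cross-device journey per logged-in user
print(unmatched)  # sessions that stay anonymous
```

The unmatched list is the weakness in action: any session where the user didn’t log in stays outside the customer view.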

Modeling

The user clicked on an ad: that doesn’t mean it deserves all the credit. As your marketing mix matures, your view on attribution gets more probabilistic. If you’re advertising on hard-to-track channels (TV, PR, billboards), you must model conversions, not simply count them.

Device Fingerprinting

Build an almost unique profile of a user’s device based on IP address and other attributes.

- Strength: gives user-level source

- Weakness: increasingly prevented by browsers

Behavior-Based Attribution

Estimate how much a user is worth based on their actions on their first visit and extrapolate.

- Strength: gives user-level values

- Weakness: garbage in, garbage out

Competitor Activity / Macro Trends

Use statistics (e.g. linear regression) to estimate the correlation between external trends and sales.

- Strength: easy to get useful results

- Weakness: lack of granularity
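
Here is a minimal sketch of that kind of analysis: a one-variable least-squares fit written with the standard library only. The weekly numbers are invented, and `fit_line` is a helper defined for this example. Keep in mind that it measures correlation, not causation.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x, plus Pearson's r."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    r = sxy / (sxx * syy) ** 0.5  # correlation between the two series
    return slope, intercept, r


# Made-up weekly data: an external trend index (e.g. search interest) and sales.
trend_index = [10, 12, 15, 11, 18, 20, 17, 22]
weekly_sales = [105, 118, 140, 112, 160, 175, 158, 190]

slope, intercept, r = fit_line(trend_index, weekly_sales)
print(f"Each extra point of trend is associated with {slope:.1f} more sales (r = {r:.2f})")
```

A single weekly series like this also shows the weakness listed above: you learn how sales move with the trend overall, but nothing about individual campaigns or users.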

Causal Inference

Emulate a controlled experiment using natural experiments, regression discontinuity, or similar.

- Strength: doesn’t require planning ahead or setting up a test, and can give valid reads on incrementality

- Weakness: only possible in certain situations

Marketing Mix Modeling

Use multi-variable regression (e.g. Bayesian MMM) to estimate the impact of each channel.

- Strength: works across every channel (even offline)

- Weakness: requires significant data science resource (or advanced software)

Experiments

The gold standard of measurement: the only way to prove one thing causes another. They’re difficult to set up for marketing, where we don’t control enough of the ecosystem to meet all of the requirements. A/B testing is often the first encounter, but it gets more scientific from there.

A/B Testing

Using client-side tools to randomly show users control or experiment versions of the website.

- Strength: easy for non-technical users

- Weakness: can only test surface level changes

Server-Side Testing

Test changes to back-end systems like algorithms, or page templates (useful for SEO).

- Strength: the only way to test core product changes

- Weakness: requires significant engineering resource

Geo Experiments

Split by geographical region and randomly expose a share of the regions to a change.

- Strength: reliable results

- Weakness: complex to set up
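
The sketch below shows the two basic steps under simplifying assumptions: randomly assigning regions to treated and control groups, then comparing sales growth between them. The region names, sales figures and helper functions are all invented for illustration, and real geo experiments usually add refinements (such as matching similar markets) that are left out here.

```python
import random


def assign_regions(regions, treated_share=0.5, seed=0):
    """Randomly pick a share of regions to receive the change."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    shuffled = list(regions)
    rng.shuffle(shuffled)
    n_treated = round(len(shuffled) * treated_share)
    return sorted(shuffled[:n_treated]), sorted(shuffled[n_treated:])


def relative_lift(pre, post, treated, control):
    """Compare post/pre sales growth in treated regions against control regions."""
    def growth(group):
        return sum(post[r] for r in group) / sum(pre[r] for r in group)
    return growth(treated) / growth(control) - 1


# Hypothetical regions and sales figures.
regions = ["North", "South", "East", "West", "Central", "Coastal"]
treated, control = assign_regions(regions)

pre = {r: 100 for r in regions}                            # sales before the test
post = {r: 115 if r in treated else 105 for r in regions}  # sales during the test

print("Treated:", treated)
print("Control:", control)
print(f"Estimated lift: {relative_lift(pre, post, treated, control):.1%}")
```

Dividing by the control group’s growth strips out whatever would have happened anyway (seasonality, price changes), which is the point of holding some regions back.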

Deprivation Testing

Switch it off, switch it on again, or run a placeholder (e.g. a charity ad) and compare the difference.

- Strength: possible on every channel

- Weakness: risks harming performance, getting “statistical significance” can be a challenge for smaller channels

Randomized Controlled Trials*

Randomly assign control and test groups and serve the change being tested to calculate lift.

- Strength: gold standard in measurement

- Weakness: not available in most platforms

*Note: technically A/B tests, server-side testing and deprivation testing are all attempts at a randomized controlled trial (RCT), and modeling techniques like causal inference attempt to emulate controlled experiments. However, in practice they often fall short of one or more of the requirements. We reserve this label for sophisticated lift testing, often administered at the advertising or marketing platform level where users are logged in and comprehensive control of the ecosystem can be exercised to meet scientific requirements.
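
To show what ‘calculating lift’ and ‘statistical significance’ mean in practice, here is a minimal sketch using a two-proportion z-test, one common way to compare conversion rates between a control and a test group. The conversion counts are invented and `lift_test` is a helper written for this example; platforms that run lift studies may use different statistics.

```python
from math import erfc, sqrt


def lift_test(control_conv, control_n, test_conv, test_n):
    """Return relative lift, z-score and two-sided p-value for two conversion rates."""
    p_control = control_conv / control_n
    p_test = test_conv / test_n
    lift = (p_test - p_control) / p_control
    # Pooled conversion rate under the assumption that there is no real difference.
    pooled = (control_conv + test_conv) / (control_n + test_n)
    std_err = sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / test_n))
    z = (p_test - p_control) / std_err
    p_value = erfc(abs(z) / sqrt(2))  # two-sided, normal approximation
    return lift, z, p_value


# Made-up results: 10,000 users per group.
lift, z, p_value = lift_test(control_conv=200, control_n=10_000,
                             test_conv=250, test_n=10_000)
print(f"Lift: {lift:.0%}, z = {z:.2f}, p = {p_value:.3f}")
```

With the same conversion rates but only a few hundred users per group, the p-value would be far larger, which is why smaller channels struggle to reach significance.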

Triangulating the Truth

With so many different methods you’re going to encounter differences of opinion. Remember that no one method has the right answer: their findings should be seen as clues that help a detective solve a case.

The first thing to strive for is internal consistency. If Facebook reports 50% more conversions than Google Analytics, investigate why that is. Once you have a good understanding you can decide which vendor to side with. Tracking down anomalies is an important part of the job.

Second, you want to use one method to calibrate another. For example, if your marketing mix model disagrees with the results of a lift study you ran, that’s a sign the model needs more work. If your model says Google Ads is only 40% incremental, multiply its reported conversions by 0.4 in reports.
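
The calibration step can be sketched in a few lines. Only the 40% figure for Google Ads comes from the example above; the reported conversion counts and the Facebook factor are invented for illustration, and `calibrate` is a hypothetical helper.

```python
# Platform-reported conversions (made-up numbers).
reported = {"google_ads": 1000, "facebook_ads": 600}

# Incrementality factors from your model or lift studies.
# 0.4 for Google Ads matches the example in the text; 0.7 is hypothetical.
incrementality = {"google_ads": 0.4, "facebook_ads": 0.7}


def calibrate(reported, incrementality):
    """Scale reported conversions by each channel's incrementality factor."""
    # Channels without a factor are left unchanged (factor of 1.0).
    return {
        channel: conversions * incrementality.get(channel, 1.0)
        for channel, conversions in reported.items()
    }


for channel, conversions in calibrate(reported, incrementality).items():
    print(f"{channel}: {conversions:.0f} incremental conversions")
```

Defaulting to 1.0 for channels you haven’t measured keeps the report complete, but it quietly assumes those channels are fully incremental, so it’s worth flagging them as uncalibrated.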

Finally, marketing attribution is not just about where to allocate budget, it’s about what to do. Every attribution method tells you something about your customer and their behaviors. Survey responses can give you ideas for experiments, and experiments can dispel long-held myths.

Every attribution method has strengths and weaknesses, and their champions always have an agenda. There will never be such a thing as a ‘single source of truth’, nor would you want to trust a single source of information with something as important as your marketing strategy. We’re all marketing scientists now, and organizations which embrace that will have an edge.